Enterprise data platforms have modernized storage, compute, and analytics. Yet the most fragile layer of the stack remains unchanged: data ingestion.

Across industries — particularly in financial asset management, portfolio reporting, and distributed operating models — enterprises struggle not with data accuracy, but with structural inconsistency.

- Reports arrive from multiple operators.

- Schemas vary.

- Column names drift.

- Formats change subtly.

- KPIs evolve without synchronized governance.

Traditional ETL (Extract, Transform, Load) systems were never designed for this level of structural variability. They assume stable schemas, predictable contracts, and tightly controlled upstream systems.

That assumption no longer reflects enterprise reality.

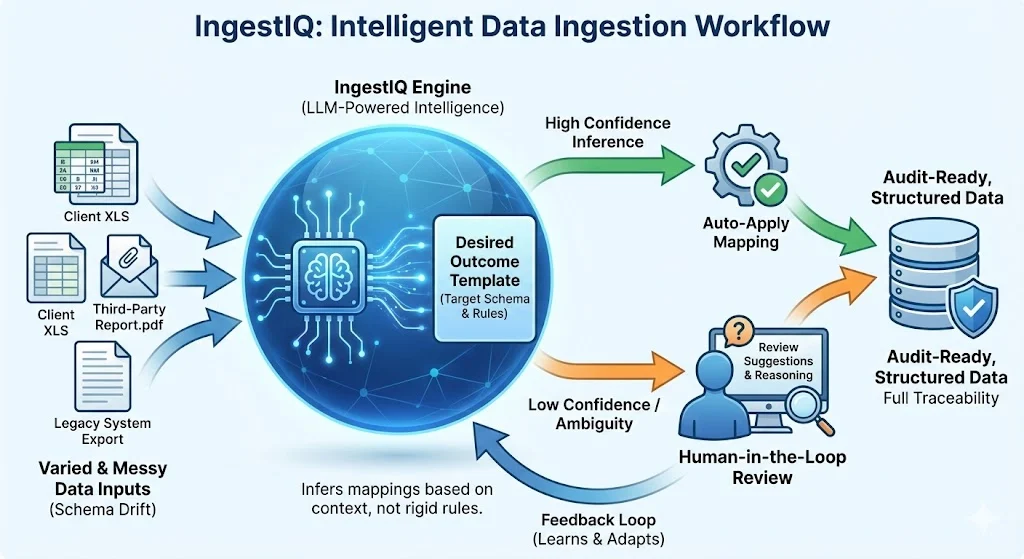

IngestIQ Agent introduces a new ingestion paradigm:

- Intent-first architecture

- LLM-powered semantic reasoning

- Confidence-based automation

- Human-in-the-loop governance

- Audit-ready lineage by design

Rather than coding transformations for every variation, IngestIQ defines what compliant data should look like — and intelligently infers how to achieve it.

This is not replacing ETL. It is replacing pipeline fragility with governed intelligence.

The Enterprise Data Landscape Has Changed: From Systems to Ecosystems

The central challenge of modern data engineering has shifted. In the past, data was moved between internal, controlled systems. Today, enterprises must ingest data from vast, uncontrolled ecosystems.

The ingestion layer is no longer just a technical pipe; it is a boundary that must reconcile extreme structural variability from:

- External Operators: Financial reports submitted in disparate formats.

- Heterogeneous ERPs: Portfolio companies running on non-standardized software.

- Third-Party Partners: Constantly evolving schemas that trigger pipeline failures.

- Manual Inputs: Spreadsheets and semi-structured documents where "data entry" is an art, not a science.

The Problem: Correct Data, Wrong Structure

In a distributed asset management environment, the "truth" is often buried under surface-level inconsistency. Consider how a single metric like Revenue or Operating Cost might arrive:

The fundamental issue is that the numbers are correct, but the structure is inconsistent.

Traditional ETL depends entirely on structure to function. When the structure drifts, the pipeline breaks—not because the data is wrong, but because the "contract" between the source and the destination was too rigid to adapt.

Introducing IngestIQ Agent: The Semantic Ingestion Engine

IngestIQ Agent is a GenAI-driven architecture engineered to replace brittle, hard-coded transformation logic with LLM-powered semantic intelligence.

Traditional systems fail because they require a "perfect match" between source and destination. IngestIQ shifts the burden of understanding from the developer to the agent. Instead of forcing every external source into a predefined template, the Agent interprets the data's meaning in real time.

Core Capabilities:

- Dynamic Structural Inference: Automatically identifies the relationship between source columns and target requirements, even when naming conventions drift.

- Contextual Intent Interpretation: Understands that "Net" in a hotel report and "Bottom Line" in a maritime statement represent the same financial concept.

- Intelligent Format Normalization: Seamlessly converts complex strings, currencies, and date formats into standardized, machine-ready values.

- Confidence-Gated Automation: Executes transformations based on a tunable confidence score—automating the obvious and flagging the ambiguous.

- Immutable Governance: Maintains a full audit trail of every semantic decision, ensuring that AI-driven insights remain transparent and regulator-ready.

IngestIQ does not remove validation; it removes rigidity. It ensures that while your data pipelines become more flexible, your business rules remain absolute.

How IngestIQ Thinks: The Architecture of Intent

The fundamental difference between legacy systems and IngestIQ is a move from procedural execution to declarative outcomes.

From “Rules-First” to “Intent-First”

Traditional ETL is preoccupied with the how:

"How do I write a script to transform Column A into Field B?"

This creates a brittle chain of dependencies.

IngestIQ focuses on the what:

"What does a compliant, gold-standard record look like, and how does this source satisfy that intent?"

This shift is powered by the Desired Outcome Template, which serves as a dynamic contract defining:

- Canonical Schema: The required fields for the destination system.

- Semantic Data Types: The expected formats (e.g., ISO currency codes, standardized dates).

- Reference Samples: Real-world examples of "perfect" data that guide the LLM's reasoning.
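To make the three parts of the template concrete, here is a minimal sketch of how such a contract could be expressed in Python. The class names (`FieldSpec`, `OutcomeTemplate`), the `semantic_type` labels, and the sample figures are all hypothetical and chosen for illustration; they are not IngestIQ's actual format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One canonical field in the target schema (hypothetical structure)."""
    name: str            # canonical field name, e.g. "Revenue"
    semantic_type: str   # expected format label, e.g. "decimal" or "iso_currency"
    required: bool = True


@dataclass(frozen=True)
class OutcomeTemplate:
    """Declarative description of what a compliant record looks like."""
    fields: tuple[FieldSpec, ...]
    reference_samples: tuple[dict, ...] = ()

    def missing_fields(self, record: dict) -> list[str]:
        """Return required canonical fields absent from a candidate record."""
        return [f.name for f in self.fields if f.required and f.name not in record]


# Illustrative template; the sample values are made up for this sketch.
template = OutcomeTemplate(
    fields=(
        FieldSpec("Revenue", "decimal"),
        FieldSpec("Operating_Cost", "decimal"),
        FieldSpec("Currency", "iso_currency"),
        FieldSpec("EBITDA", "decimal", required=False),
    ),
    reference_samples=(
        {"Revenue": 12500000, "Operating_Cost": 8000000, "Currency": "USD"},
    ),
)

print(template.missing_fields({"Revenue": 12500000}))
```

The template only states the target; it contains no per-source mapping logic, which is the point of the intent-first approach.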

A Practical Example: Portfolio Reporting Without Fragile Pipelines

Consider a financial asset manager consolidating monthly reports from a diverse portfolio of infrastructure assets: Hotels, Roads, and Ports. The underlying data is accurate, but the structural "drift" is extreme:

- Hotel Report: Uses `Rev_USD`, `Ops_Cost`, and values like `"USD 12.5M"`.

- Road Report: Uses `Revenue` and `OperatingExpense`.

- Port Report: Uses `Total_Revenue` and `Opex`.

The Traditional ETL Tax

In a rules-first world, this requires building and maintaining separate pipelines for every asset type. Any minor change in a spreadsheet—a renamed column or a new currency format—breaks the pipeline, requiring manual engineering intervention and re-testing.
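The fragility is easy to reproduce. The sketch below hard-codes the hotel report's column names and then feeds it a port report. The function name `rigid_transform` and the cost values are illustrative assumptions, not taken from any real pipeline.

```python
# Hard-coded column contract written against the hotel report's layout.
HOTEL_COLUMNS = {"Rev_USD": "Revenue", "Ops_Cost": "Operating_Cost"}


def rigid_transform(row: dict) -> dict:
    """Rules-first mapping: every source column name is assumed to be fixed."""
    return {target: row[source] for source, target in HOTEL_COLUMNS.items()}


# Illustrative rows; the cost figures are invented for this example.
hotel_row = {"Rev_USD": "USD 12.5M", "Ops_Cost": "USD 8.0M"}
port_row = {"Total_Revenue": "12.5M", "Opex": "8.0M"}

print(rigid_transform(hotel_row))

try:
    rigid_transform(port_row)
except KeyError as missing:
    # Same concept, different header: the lookup fails before any value is read.
    print(f"pipeline broke on missing column {missing}")
```

Nothing about the port data is wrong. The failure comes entirely from the header names, which is why each new source ends up needing its own pipeline.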

The IngestIQ Advantage

IngestIQ utilizes Structured Semantic Inference to bypass manual mapping:

- Semantic Mapping: The LLM recognizes that `Rev_USD`, `Revenue`, and `Total_Revenue` all map to the canonical `Revenue` field.

- Format Intelligence: It contextually converts `"12.5M"` into the numeric value `12500000`.

- Derived Reasoning: If a metric like EBITDA is missing, the system detects the relationship (`EBITDA = Revenue - Operating_Cost`) and proposes a derived value.

- Confidence Scoring: Every inference is assigned a probability score.
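The deterministic half of these steps can be sketched in a few lines of Python. This is an illustration under stated assumptions: the `CANONICAL` dictionary is a hand-written stand-in for the mapping an LLM would propose, the `K`/`M`/`B` suffix convention and the regular expression are assumptions about the input, and the function names are hypothetical. Confidence scoring is left out.

```python
import re
from decimal import Decimal

# Hypothetical synonym table standing in for the LLM's semantic mapping.
CANONICAL = {
    "Rev_USD": "Revenue", "Revenue": "Revenue", "Total_Revenue": "Revenue",
    "Ops_Cost": "Operating_Cost", "OperatingExpense": "Operating_Cost",
    "Opex": "Operating_Cost",
}
MULTIPLIERS = {"K": Decimal(1_000), "M": Decimal(1_000_000), "B": Decimal(1_000_000_000)}
# Optional 3-letter currency code, a number with optional commas/decimals, optional suffix.
AMOUNT = re.compile(r"^\s*(?:[A-Z]{3}\s+)?([\d,]+(?:\.\d+)?)\s*([KMB])?\s*$")


def parse_amount(raw: str) -> Decimal:
    """Convert strings such as "USD 12.5M" or "12,500,000" to a Decimal."""
    match = AMOUNT.match(raw)
    if match is None:
        raise ValueError(f"unrecognized amount: {raw!r}")
    number, suffix = match.groups()
    value = Decimal(number.replace(",", ""))
    return value * MULTIPLIERS.get(suffix, Decimal(1))


def normalize(row: dict) -> dict:
    """Rename known columns, parse amounts, and derive EBITDA if absent."""
    record = {CANONICAL[k]: parse_amount(v) for k, v in row.items() if k in CANONICAL}
    has_inputs = all(k in record for k in ("Revenue", "Operating_Cost"))
    if "EBITDA" not in record and has_inputs:
        # Relationship taken from the example above: EBITDA = Revenue - Operating_Cost.
        record["EBITDA"] = record["Revenue"] - record["Operating_Cost"]
    return record


# Illustrative input; the cost figure is invented for this sketch.
print(normalize({"Rev_USD": "USD 12.5M", "Ops_Cost": "USD 8.0M"}))
```

Using `Decimal` rather than `float` keeps the parsed amounts exact, which matters once the values reach downstream reconciliation.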

Confidence-Based Automation: Built for Production

IngestIQ is engineered for the rigors of enterprise production, where "black box" automation is a non-starter. We treat AI as a probabilistic engine wrapped in deterministic guardrails.

- Gated Automation: You set the "risk dial." High-confidence transformations (e.g., >95%) flow through automatically, while anything ambiguous is routed to a Human-in-the-Loop (HITL) dashboard.

- Explainable Lineage: Every data point carries its "biography"—showing the source file, the LLM’s reasoning for a specific mapping, and the human approval history.

- Audit-Ready Governance: IngestIQ preserves a full trail of intent and execution, making it suitable for regulated environments where transparency is as critical as throughput.
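As a rough illustration of the gating idea, the sketch below splits proposed mappings into an auto-approved queue and a review queue, keeping the reasoning alongside each proposal so it can be audited later. The `Inference` record, the `route` function, the file names, and the confidence values are hypothetical. The 0.95 default mirrors the ">95%" example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Inference:
    """One proposed mapping plus the evidence needed to audit it later."""
    source_file: str
    source_column: str
    target_field: str
    confidence: float   # assumed to lie in [0.0, 1.0]
    reasoning: str      # model's stated rationale, stored for lineage


def route(inferences: list[Inference], threshold: float = 0.95):
    """Split inferences into auto-approved and human-review queues."""
    auto, review = [], []
    for inference in inferences:
        # Strictly above the threshold flows through; everything else goes to a human.
        (auto if inference.confidence > threshold else review).append(inference)
    return auto, review


# Illustrative proposals; file names and scores are invented.
proposals = [
    Inference("hotel_report.xlsx", "Rev_USD", "Revenue", 0.99, "abbreviation of revenue"),
    Inference("port_report.xlsx", "Net", "EBITDA", 0.62, "ambiguous: net of what?"),
]
auto, review = route(proposals)
print([i.source_column for i in auto], [i.source_column for i in review])
```

Because each record is immutable and carries its source file and rationale, the same object can serve as the lineage entry whether it was approved automatically or by a reviewer.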

The Hybrid Advantage: What IngestIQ Replaces—and What It Preserves

IngestIQ is not a "rip-and-replace" for your entire data stack; it is a surgical upgrade for the most brittle part of it. By distinguishing between probabilistic reasoning (intelligence) and deterministic execution (rules), IngestIQ creates a more resilient, hybrid ingestion model.

The Shift: Legacy ETL vs. IngestIQ Agent

The Bedrock: What IngestIQ Does Not Replace

Engineering a production-ready system means knowing where AI should stop. IngestIQ honors the non-negotiable "Source of Truth" layers that define enterprise governance.

- Deterministic Reconciliations: Final "penny-perfect" rollups remain the domain of rigid arithmetic logic.

- Regulatory Validation: Compliance checks and audit trails are preserved exactly as dictated by policy.

- Financial Controls: Arithmetic verification and balance sheet integrity are never left to inference.

- Governance Frameworks: The system augments your existing security and access protocols rather than bypassing them.

The Result: IngestIQ handles the complexity of the world (variability, drift, semantics) so your deterministic systems can focus on the certainty of the business (compliance, math, rules).

Engineering for the Real World: A Production-Grade GenAI Architecture

Most AI initiatives stall because they lack the guardrails required for regulated industries. IngestIQ is engineered to solve for enterprise constraints, treating GenAI as a specialized component within a deterministic framework rather than a "black box" solution.

Bounded Intelligence

IngestIQ operates on the principle of Separation of Concerns:

- Semantic Reasoning: LLMs are used exclusively for interpreting context and intent.

- Numerical Integrity: Deterministic systems handle all arithmetic and numeric validation.

- Risk Mitigation: Confidence gating ensures that the system never "guesses" on critical data.

- Native Auditability: Lineage and reasoning are captured at the point of inference, not bolted on after the fact.
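One way to picture this separation is a deterministic check that sits after the inference step and accepts a proposed value only if plain arithmetic reproduces it. The sketch below assumes the simplified relationship used earlier (`EBITDA = Revenue - Operating_Cost`); the function names and figures are hypothetical.

```python
from decimal import Decimal


def reconcile(line_items: list[Decimal], reported_total: Decimal) -> bool:
    """Exact check: the reported total must equal the sum of its parts."""
    return sum(line_items, Decimal(0)) == reported_total


def accept_derived(record: dict) -> dict:
    """Keep a proposed EBITDA only if exact arithmetic reproduces it."""
    expected = record["Revenue"] - record["Operating_Cost"]
    if record.get("EBITDA") != expected:
        raise ValueError(f"EBITDA {record.get('EBITDA')} != {expected}")
    return record


# Decimal arithmetic is exact here, where binary floats would not be.
print(reconcile([Decimal("0.10"), Decimal("0.20")], Decimal("0.30")))
print(0.1 + 0.2 == 0.3)

# Illustrative record; values are invented for this sketch.
checked = accept_derived({
    "Revenue": Decimal("12500000"),
    "Operating_Cost": Decimal("8000000"),
    "EBITDA": Decimal("4500000"),
})
```

The model never performs the subtraction itself in this arrangement. It can suggest that a relationship exists, and the deterministic layer decides whether the numbers hold.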

This is not experimental AI; it is governed intelligence applied precisely where rule-based systems fail.

The Architectural Shift: From Coding to Orchestration

The move to IngestIQ represents a fundamental change in how data teams operate.

- The Traditional Mindset: "How do we write and maintain custom code for every possible source variation?" This leads to an "Engineering Debt" that grows with every new asset.

- The IngestIQ Mindset: "How do we describe the desired outcome clearly enough for the system to infer the path?" By shifting from coding transformations to defining Outcome Templates, the ingestion layer becomes adaptive. The pipeline remains stable even as source variability increases, allowing your team to move from reactive maintenance to proactive orchestration.

Strategic Impact: The Leadership Perspective

For CIOs, CDOs, and Asset Management leaders, the transition to intelligent ingestion delivers measurable operational alpha:

- Efficiency: 60–80% reduction in manual data consolidation and analyst effort.

- Agility: Onboard new assets or data partners in hours, not weeks.

- Resilience: Drastically reduced operational risk from schema drift and format changes.

- Scalability: Decouple portfolio growth from headcount growth.

- Transparency: Achieve a superior audit posture with full "source-to-target" explainability.

Final Thought: The Evolution of Ingestion

ETL is not disappearing; it is evolving. The next generation of enterprise infrastructure will not be defined by rigid pipelines, but by intelligent agents operating within governed boundaries.

If traditional ETL was built for stable, internal databases, IngestIQ Agent is built for the dynamic, unpredictable ecosystems of the modern enterprise.

Ready to Rethink Data Ingestion?

Traditional ETL was built for a world that no longer exists. If your team is still spending 80% of their time on data preparation and only 20% on analysis, it’s time to flip the script.

Join the enterprises moving from reactive pipeline maintenance to intelligent, intent-driven orchestration.